Why Are Old Photos Sepia-Toned?

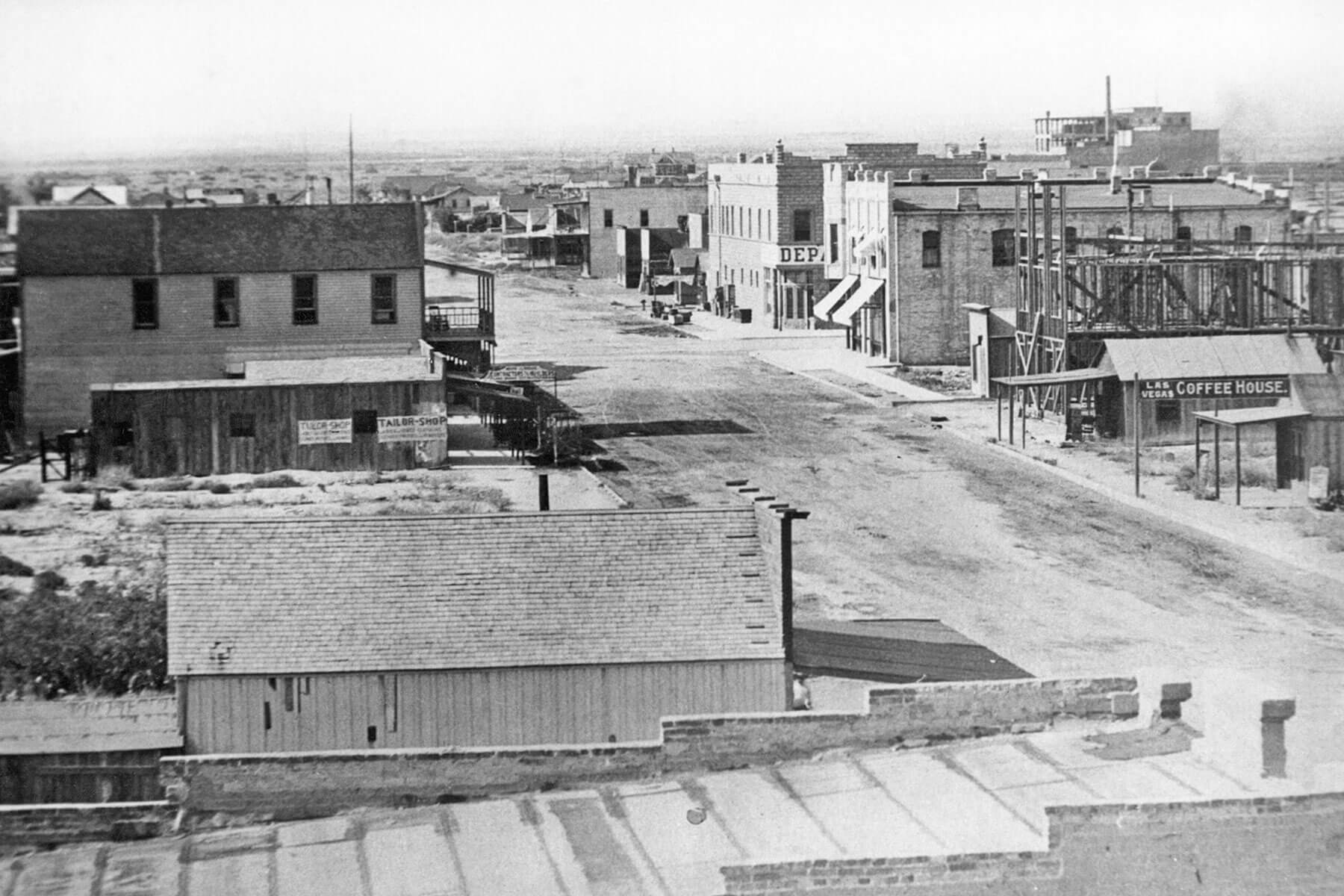

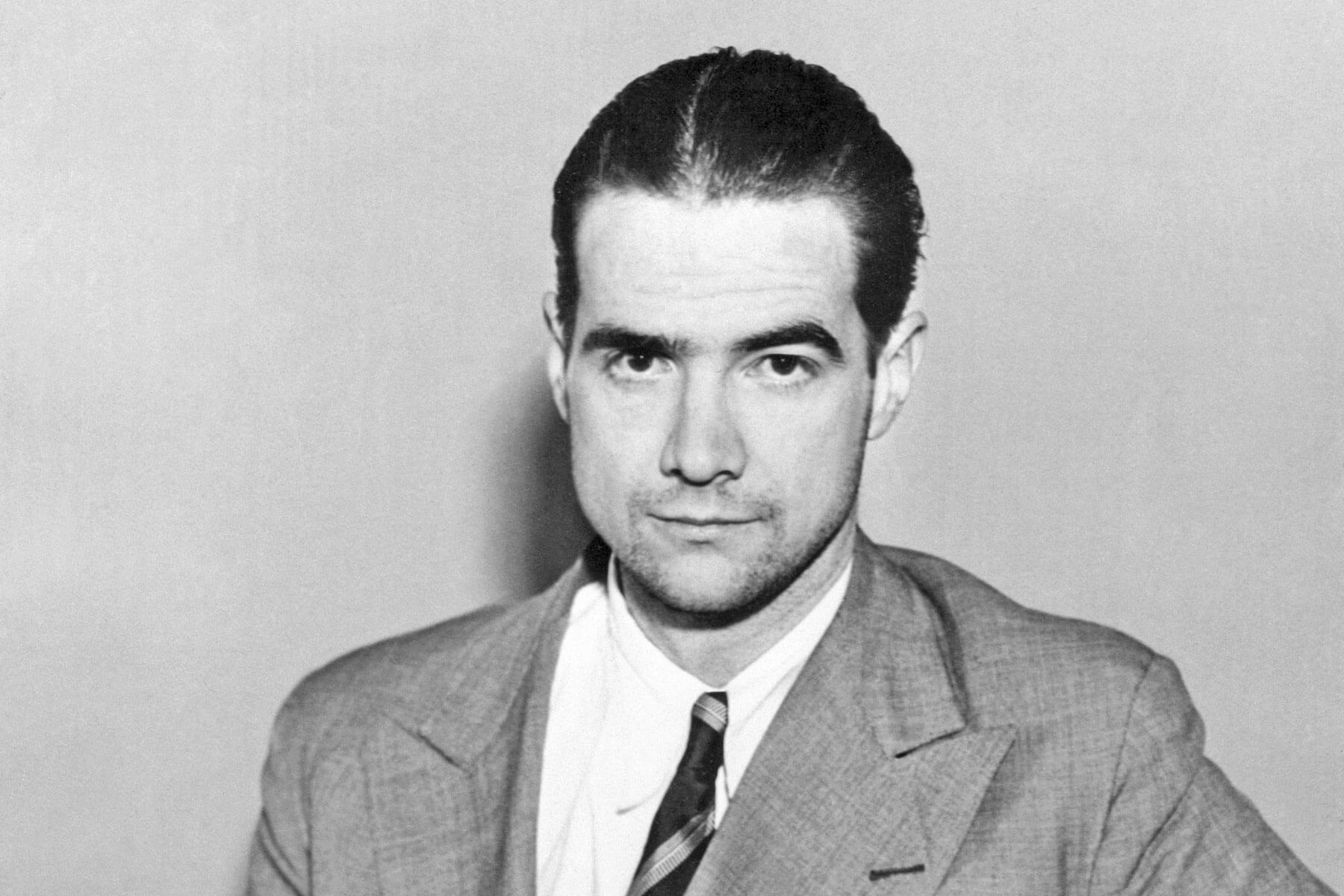

Developed in the late 1820s, photography revolutionized the way history could be documented, blending art and science to create lasting visual records. Early photographs were black and white, producing stark, high-contrast images that showcased the technical achievement of the new medium. By the 1880s, however, photographs began taking on a warm, brownish tint. This distinctive look, known as sepia toning, became a hallmark of photography, particularly portraiture, around the turn of the 20th century.

Sepia toning was not merely an aesthetic preference. It was a direct result of technical advances aimed at improving both the longevity and the visual quality of photographs. As pioneers in the field looked for ways to make their images more durable, sepia toning emerged as a practical and widely adopted solution. The process extended the lifespan of photographs by slowing fading and deterioration over time. As a result, sepia-toned prints dominated photography for several decades.

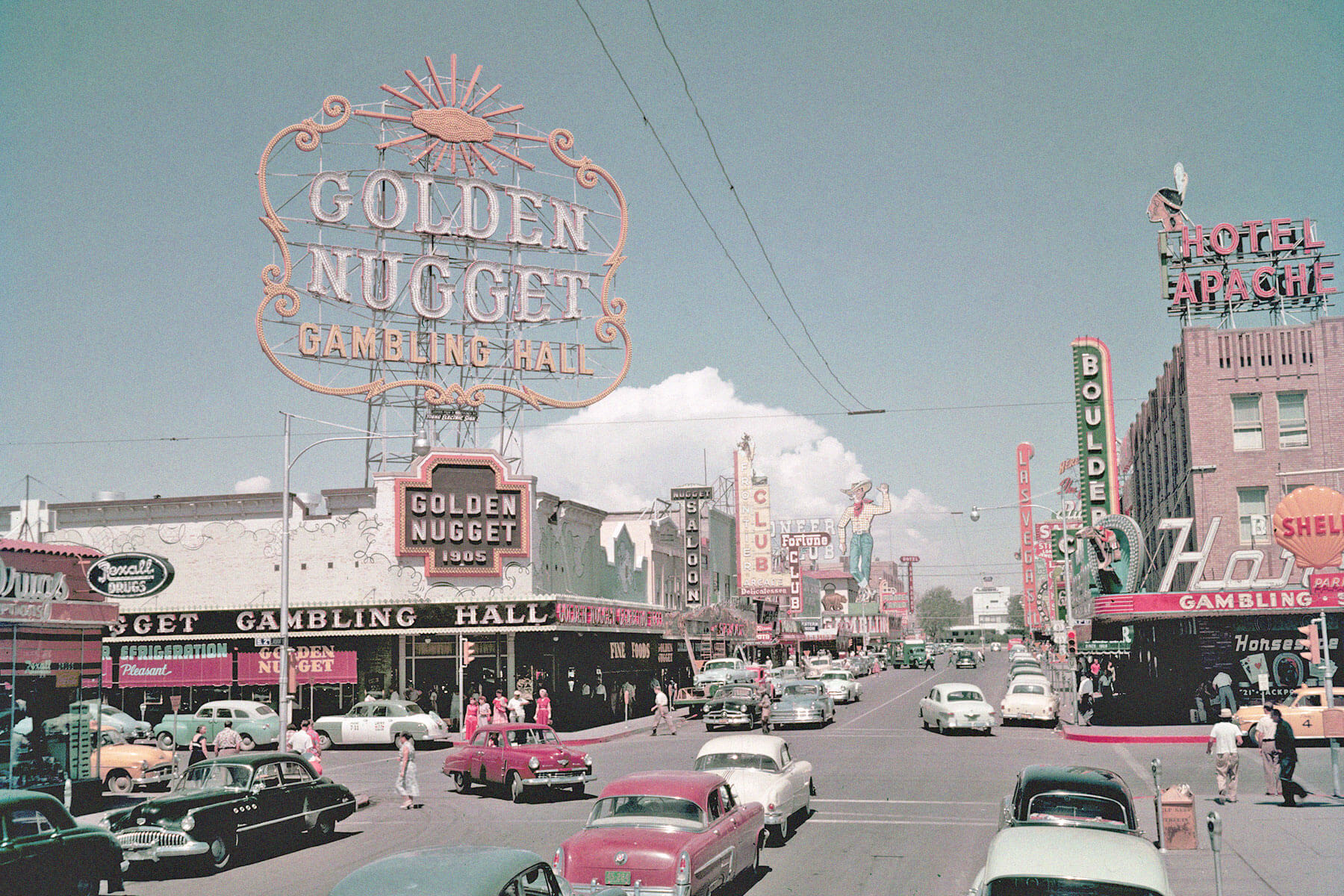

Despite their brownish hues, these photographs are still considered a form of black-and-white photography. Sepia toning warms the monochrome image, but it doesn’t introduce additional colors: the picture is still made up of shades of a single hue.

Sepia Toning Was Used To Preserve Photos

In the early days of photography, creating an image was a complicated chemical process that required precise control over light-sensitive materials. Photographers coated surfaces such as glass, metal, and paper with silver-based compounds, including silver halides. When exposed to light, these compounds underwent a chemical reaction that, once the plate or print was processed, produced a visible image made of fine particles of silver.

Despite their remarkable ability to capture detail, early photographs were highly susceptible to environmental damage. Over time, exposure to light, heat, and air caused the silver particles to oxidize, so the images faded and discolored.

To address this problem, photographers developed a technique known as “toning,” in which finished prints were treated with chemical solutions that changed their color and improved their longevity. Sepia toning, named after the brown pigment made from the ink of the common cuttlefish, *Sepia officinalis*, became one of the most effective and widely adopted toning methods.

The process converted some of the fragile metallic silver in a print into silver sulfide, a more stable compound that is less prone to oxidation and fading. This chemical change gave the photographs their characteristic warm, brownish hue and also extended their lifespan. In an era when photography was expensive and time-consuming, that durability mattered: it allowed images to be preserved for generations.

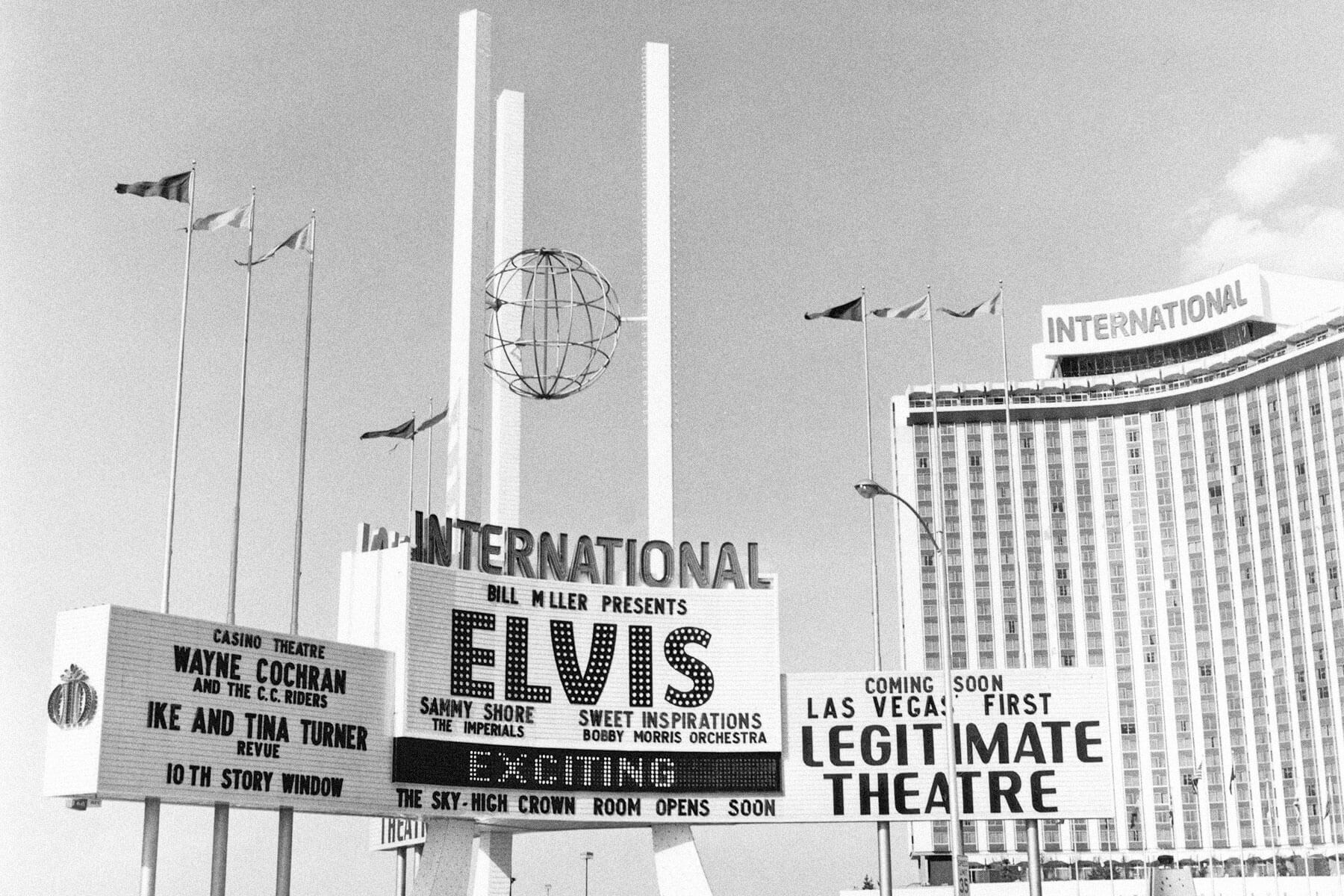

Sepia toning gained popularity in the 1880s as photographers sought prints that were both visually appealing and long-lasting. Sepia-toned photographs are often said to last 50% to 100% longer than untoned black-and-white prints. However, there was no universal formula for sepia toning, so photographers developed their own chemical recipes. The result was a range of brown hues, from light golden brown to dark reddish brown. The toning process remained in widespread use well into the 20th century, allowing countless photographs from that era to survive to the present day.