Michelangelo’s David was censored by Queen Victoria.

The statue of David is among Michelangelo’s greatest masterpieces, but the sculpture isn’t without detractors. One such critic was none other than Queen Victoria, who reigned over the United Kingdom from 1837 until 1901, centuries after the original David was sculpted in 1504. In 1857, a plaster replica of the David was shipped to Great Britain as a gift to Victoria from Leopold II, the grand duke of Tuscany. While the queen accepted the diplomatic gesture with grace, she was, according to some anecdotal reports, left aghast by the statue’s blatant nudity. The work was sent to be displayed at what is now London’s Victoria and Albert Museum, where curators crafted a plaster fig leaf to obscure the genitals that some found offensive. The statue otherwise remained uncensored: the leaf was attached with the help of “strategically placed hooks” that allowed it to be lowered into place in anticipation of any visits from female members of the royal family. The leaf, a replica of which can still be found in the museum’s collection, was last used during the first half of the 20th century, leaving the replica of David fully nude as Michelangelo initially intended.

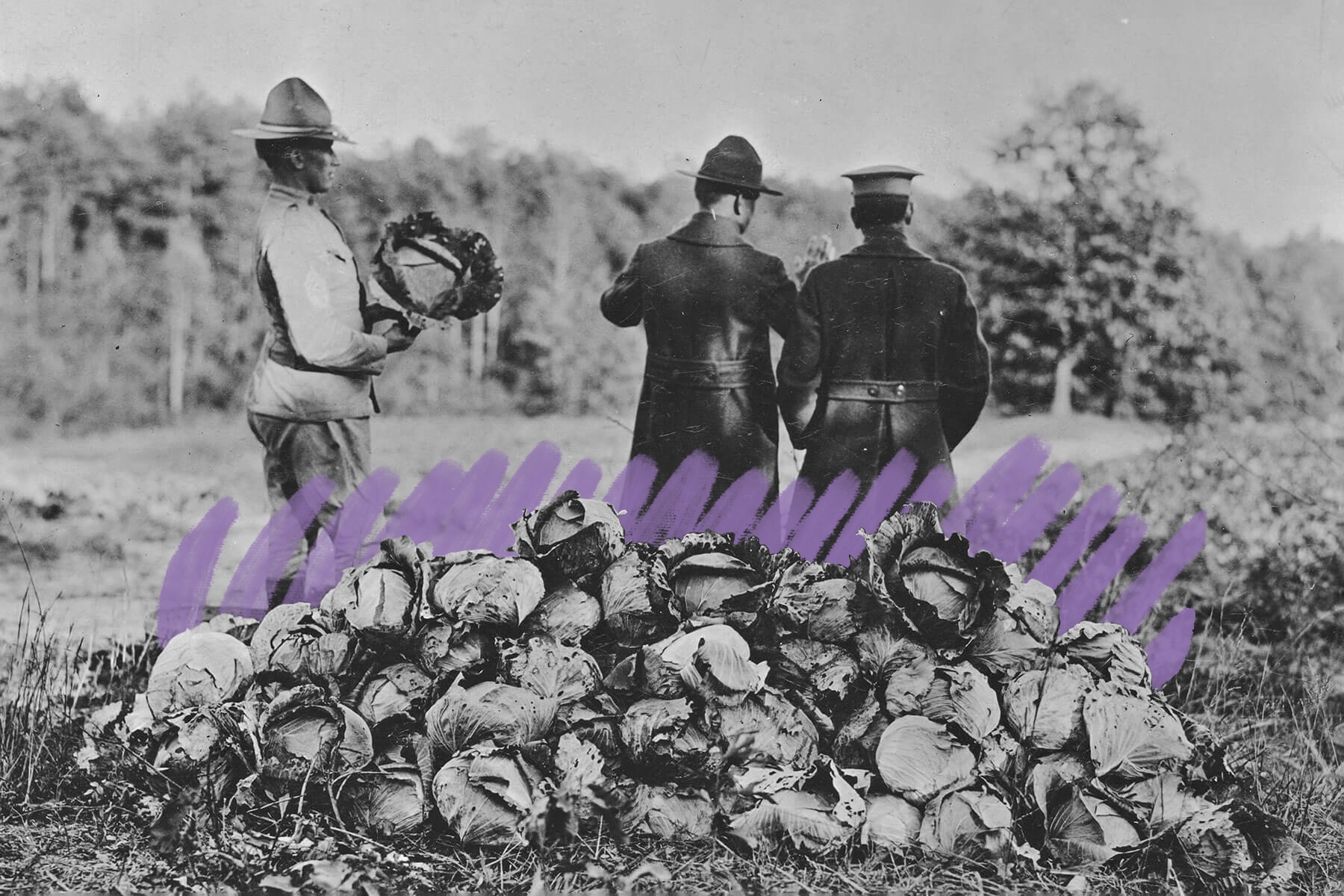

However, even the original David statue endured years of unceremonious censorship. Upon its unveiling in Florence in 1504, the sculpture was fitted with a garland of 28 copper leaves to hide its nakedness, and the garland remained around David’s waist until at least the mid-16th century. During that same period, around the year 1541, the Vatican launched a “Fig Leaf Campaign” to censor nudity in art that it deemed offensive. The Catholic Church initially sought to cover up the naked figures in Michelangelo’s painting “The Last Judgment,” as well as similar works. Thankfully, many of these once-censored works of art have since been restored to their original condition.