4 Secrets of the CIA

The Central Intelligence Agency has its fingers in many pies, from counterterrorism to offensive cyber operations and covert paramilitary actions. The mere mention of the CIA brings with it a certain mystique, conjuring up images of secret agents, globe-trotting spies, and clandestine activities. It’s no surprise, then, that the agency has featured heavily in numerous Hollywood movies, from Spy Game and Zero Dark Thirty to The Bourne Identity and Bridge of Spies.

The CIA was formed in 1947 by President Harry Truman, partly as a replacement for the Office of Strategic Services (OSS), which was disbanded after World War II. As a civilian intelligence service and part of the U.S. Intelligence Community, it is officially tasked with gathering, processing, and analyzing national security information from around the world. Unlike the FBI, the CIA has no law enforcement function. It is also generally barred from collecting intelligence on “U.S. persons,” although the agency’s actions have often proven controversial in that regard.

Unsurprisingly, the CIA has kept — and uncovered — many secrets over the decades. Here are some of the most fascinating secrets from the agency’s history, from innovative spy techniques to daring covert missions.

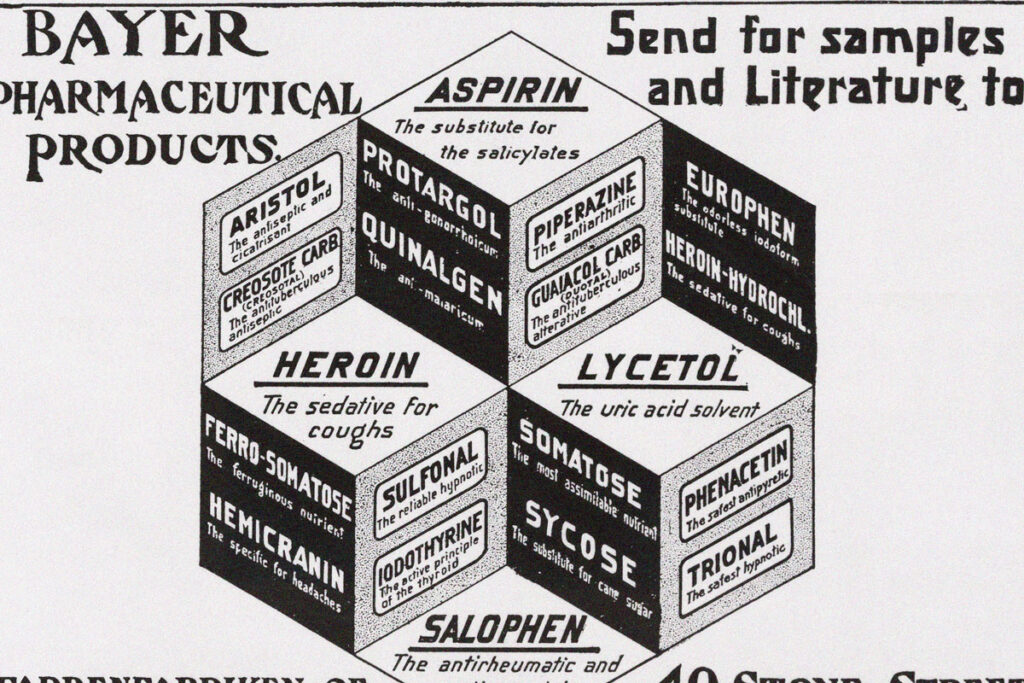

The CIA Had Plenty of Secret Gadgets

The CIA created a range of secret gadgets that could have come straight out of a James Bond movie. The extensive list of low- and high-tech trickery includes hollow silver dollars for holding messages or film; miniature compasses hidden in cufflinks; pigeon-mounted mini cameras; a listening device designed to look like tiger excrement; and a robot fish called Charlie that secretly collected water samples. Perhaps most impressive of all was the “insectothopter,” a tiny robotic dragonfly built to carry a listening device and eavesdrop on conversations that would otherwise be out of earshot.

The CIA Used the Skyhook Before Bond and Batman

In 1962, the CIA launched a mission, code-named Project COLDFEET, to investigate an abandoned Soviet research station on a floating ice island in the Arctic. Getting there was easy enough: Two U.S. military officers secretly parachuted onto the ice and began searching for information. The tricky part was recovering the officers and the information they had gathered, since it was impossible to land an aircraft on the ice. So the CIA decided to use its new Fulton surface-to-air recovery system, colloquially known as the Skyhook. The men on the ground deployed a helium balloon that lifted a 500-foot line into the air. A slow-moving B-17, with the Skyhook device attached to its nose, then flew overhead and snagged the line, sweeping the men attached to its other end into the air, after which they were winched aboard the aircraft. Sound familiar? You might have seen the Skyhook used later by James Bond in 1965’s Thunderball and Batman in 2008’s The Dark Knight.