Why Are Traffic Lights Red, Yellow, and Green?

For drivers in many parts of the globe, the red, yellow, and green sequence of traffic lights has become an instinctual part of how we move through the world. But why these three colors? How did they come to govern the movement of billions of people across the planet every day? As it turns out, there are a few factors in play, including the history of transport, color psychology, and basic physics.

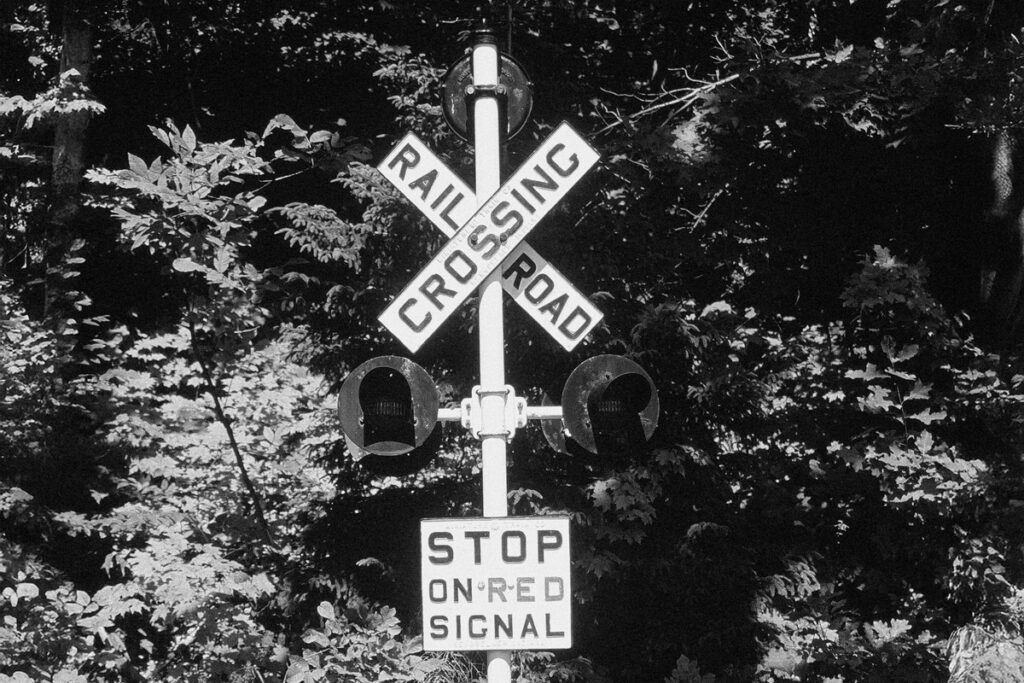

Red Lights and Railroads

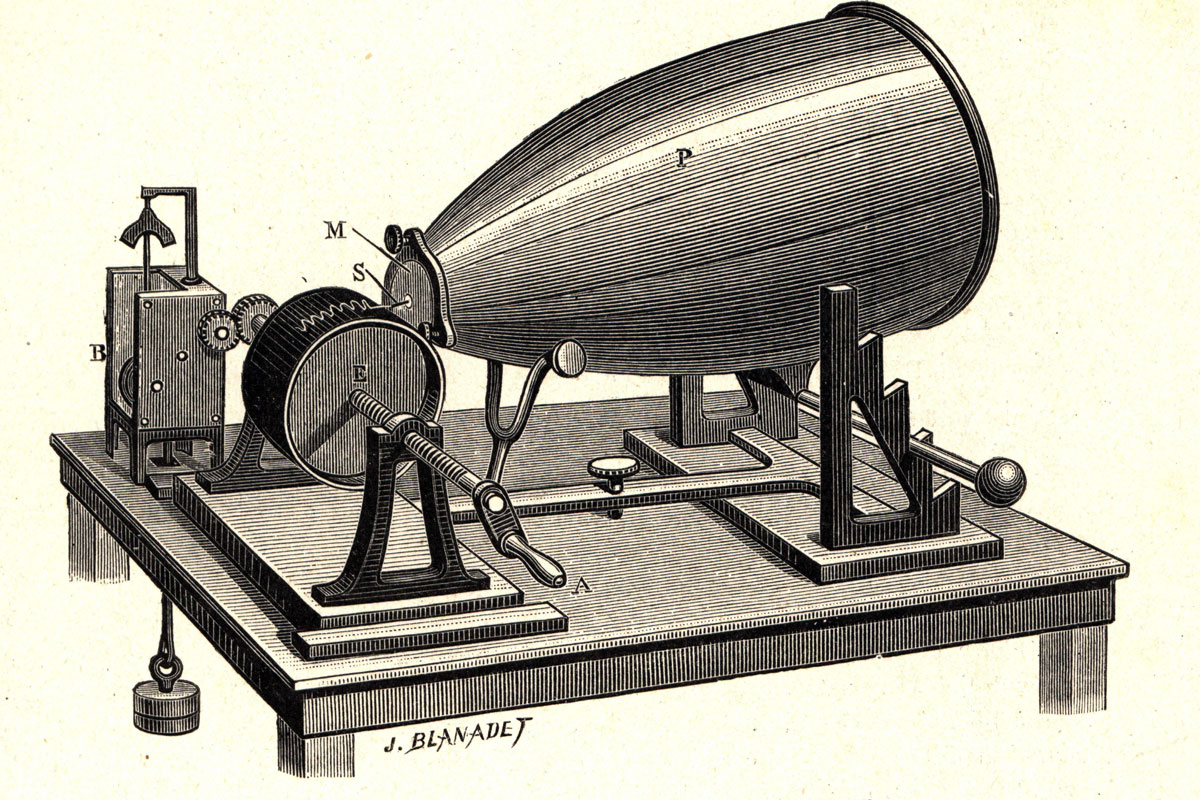

The colors used in traffic lights today were not invented for roads. They were largely inherited from the railway industry, which began developing light-based signaling systems in the 1830s (based on even older semaphore flagging). But in the early days of railroads, the colors were slightly different: While red was used to signal “stop,” white meant “go,” and green was the color used to indicate caution.

The use of red in the original system was never really in doubt. In the human psyche, red has an innate connection with warning and danger — a psychological and possibly biological response that might be due to the color’s association with blood and fire.

That alone makes red a natural choice for warning signs. But there’s also some physics in play. Red has the longest wavelength of any color in the visible light spectrum, and longer wavelengths are scattered less by the air than shorter ones. As a result, red light stays visible over greater distances than most other colors.
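To put a rough number on that, here is a back-of-the-envelope sketch. It assumes clear air, where scattering by gas molecules (Rayleigh scattering) is proportional to the inverse fourth power of the wavelength, and it uses illustrative wavelengths of about 650 nm for red and 450 nm for blue. Neither figure comes from a signaling standard.

$$
I_{\text{scattered}} \propto \frac{1}{\lambda^{4}}
\qquad\Rightarrow\qquad
\frac{I_{\text{blue}}}{I_{\text{red}}} = \left(\frac{650\ \text{nm}}{450\ \text{nm}}\right)^{4} \approx 4.4
$$

Under those assumptions, blue light is scattered roughly four times as strongly as red. In thick fog the droplets are much larger than the wavelength of light, so this simple relation no longer holds and red's advantage is likely smaller.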

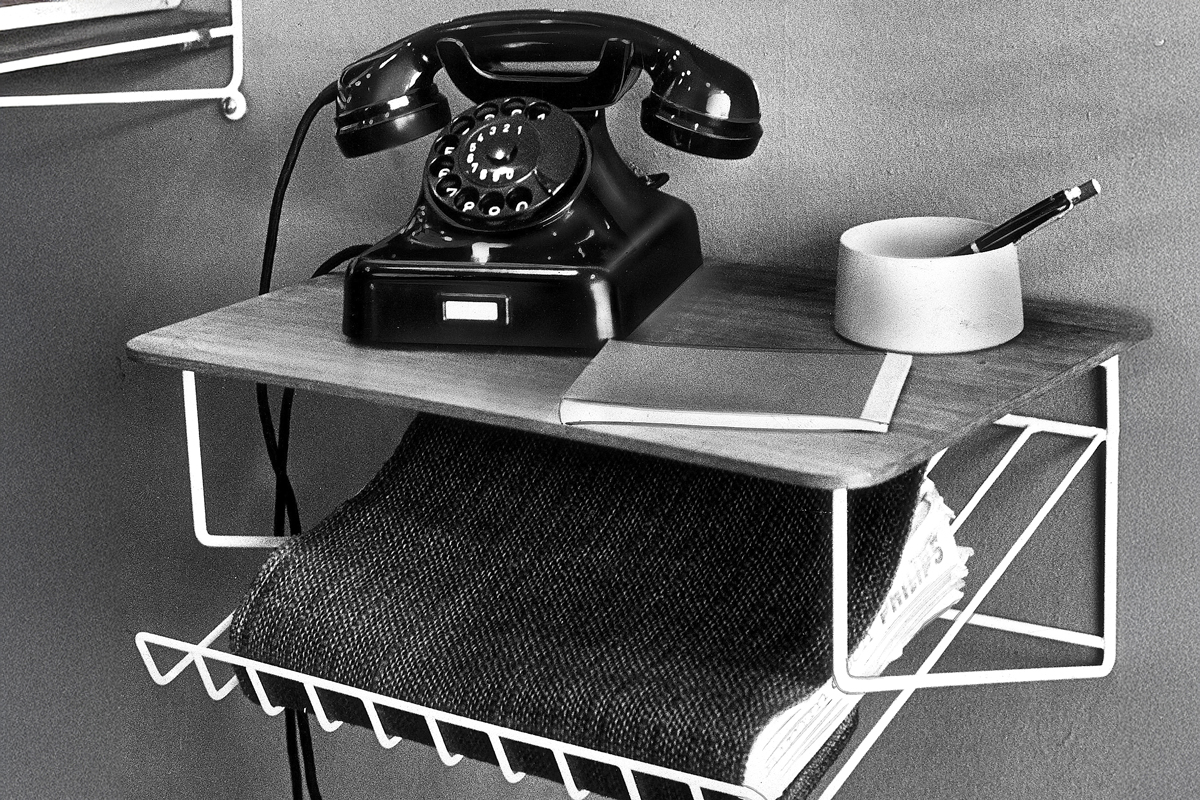

So, when a signal must be seen from as far away as possible, and remain visible in fog, in rain, or at night, red is the perfect choice. The people in charge of developing railway signals were well aware of both the psychology and physics of the hue, and as it worked just fine for trains, it was later used as the color for “stop” on the roads, including in the first electric traffic light, which is widely credited to Cleveland, Ohio, in 1914.