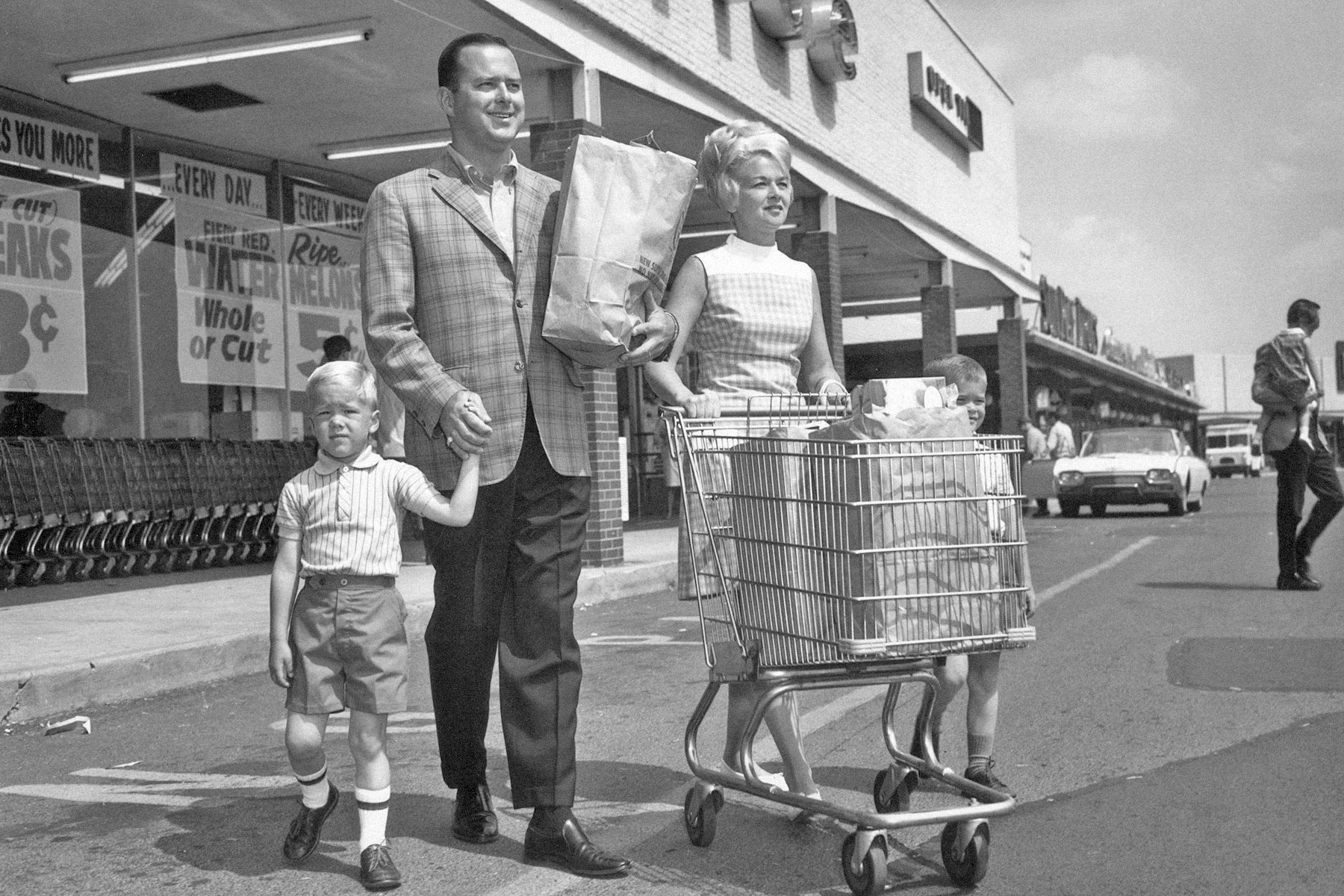

Life in 1960s America, By the Numbers

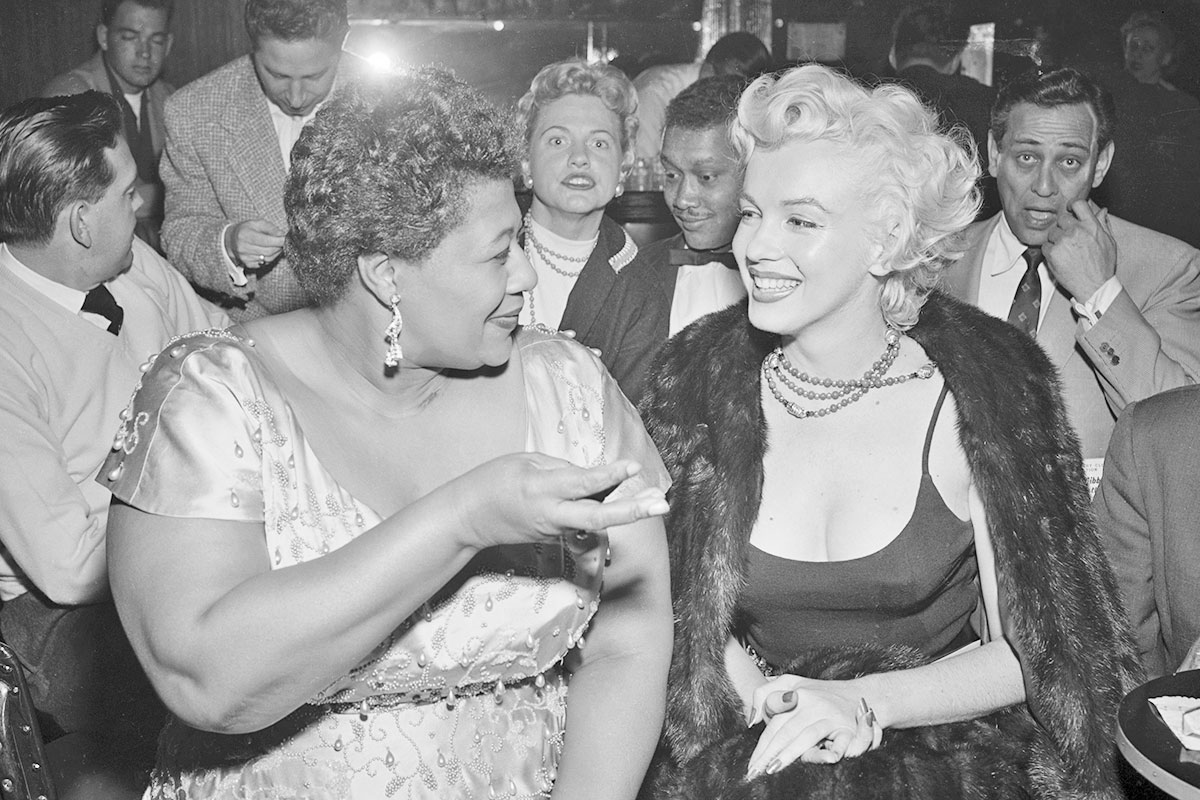

The 1960s were some of the most significant years in American history. The decade saw the Civil Rights Movement and a rising counterculture that reimagined the shape of the American social fabric. Pop music exploded like never before with the British Invasion led by the Beatles and Rolling Stones, but the ’60s were also an intense era of war and political violence.

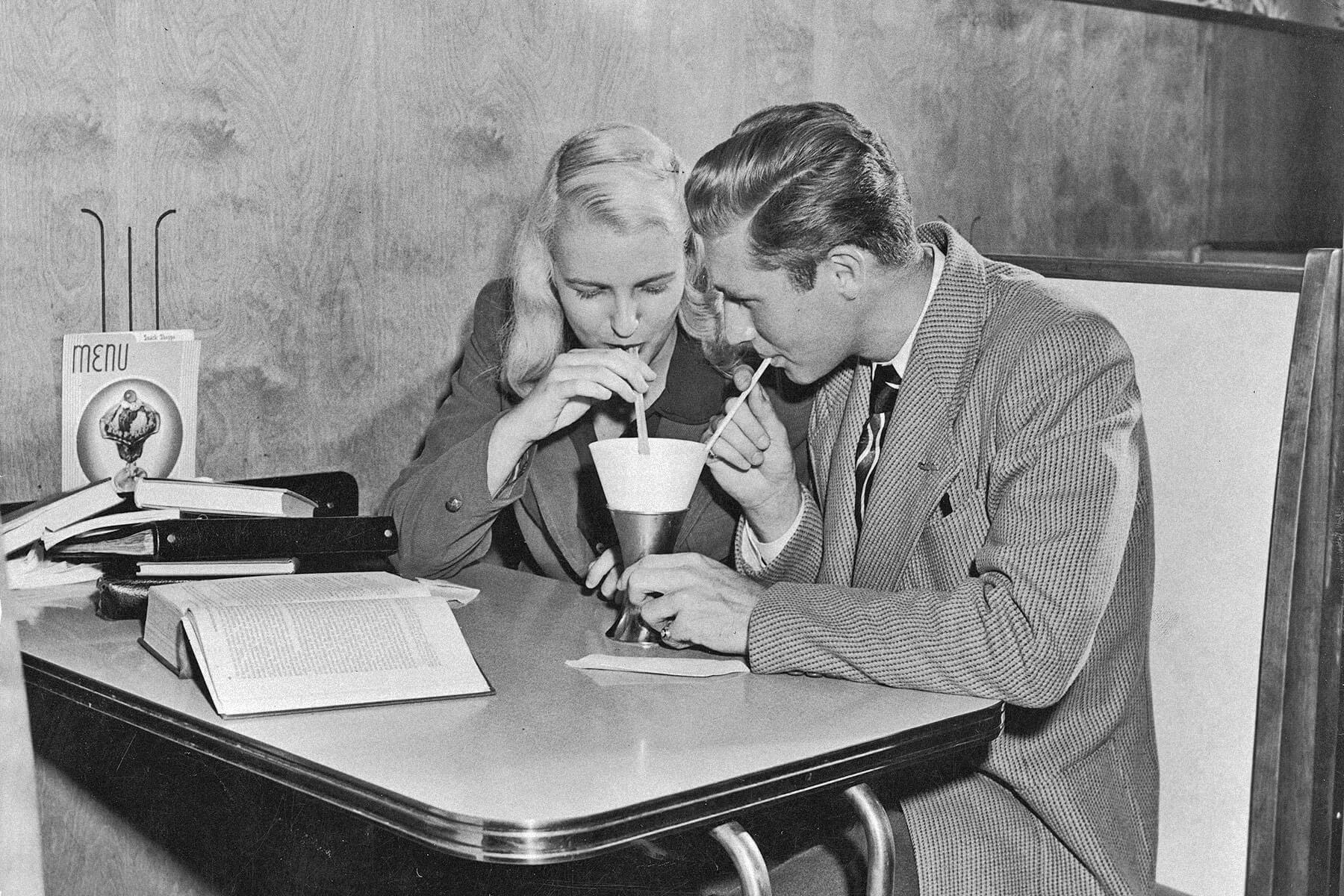

The decade’s most monumental moments tend to be widely covered, and the sheer number of historic events during this time almost creates the impression that every moment was imbued with turbulence. But while the tumult of the decade played out on the evening news in homes across America, many people were still living normal everyday lives, albeit lives that looked quite different from our modern lifestyle. The following numbers offer a snapshot of day-to-day life in 1960s America.

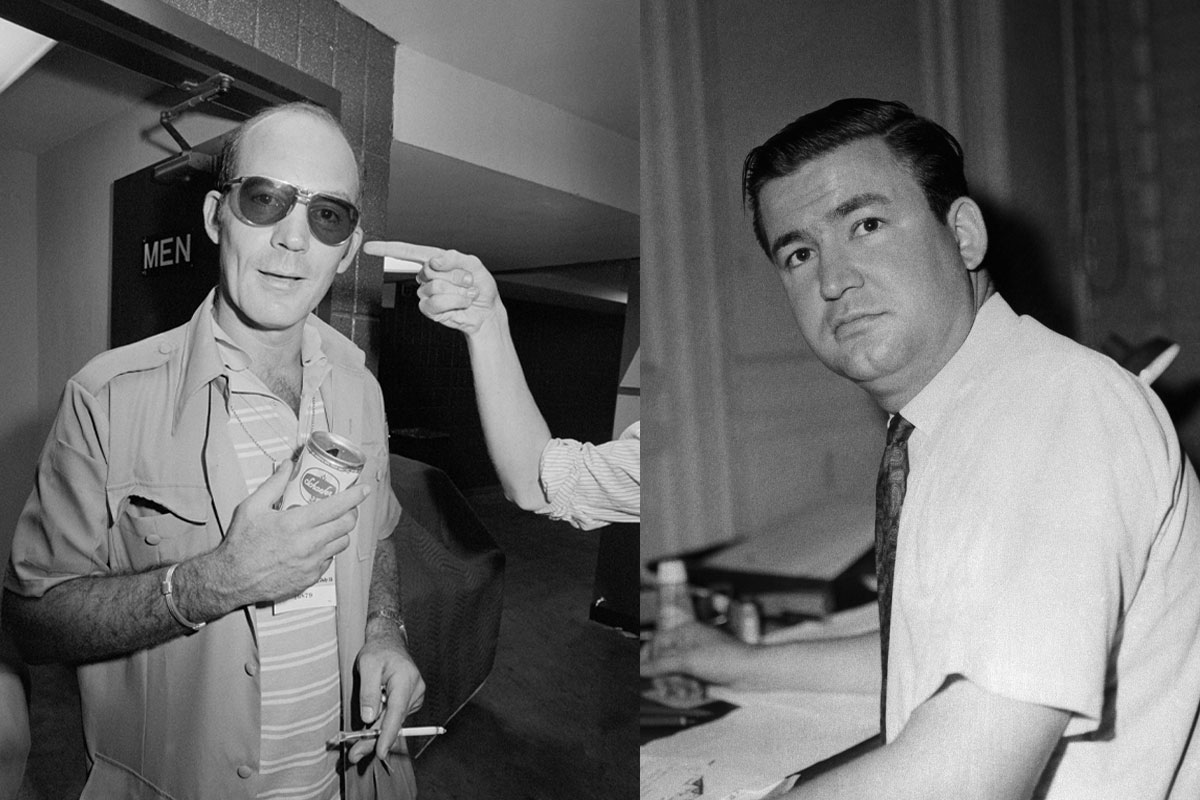

42% of Adults Were Smokers

Smoking was still widespread in the middle of the 20th century. The smoking rate in the U.S. reached a peak of 47% of adults (including 50% of doctors!) by the end of 1952. Though cigarette sales declined somewhat in 1953 and 1954 amid growing health concerns, the introduction of the filtered cigarette created a rebound. Through the early years of the 1960s, the smoking rate held steady at 42% of adults. On January 11, 1964, Surgeon General Luther L. Terry published the first report of the Surgeon General’s Advisory Committee on Smoking and Health, a landmark event that brought the link between smoking and disease front and center in the American consciousness. Smoking has been on an overall downward trend ever since: As of 2021, the smoking rate had declined to 11.5% of adults.