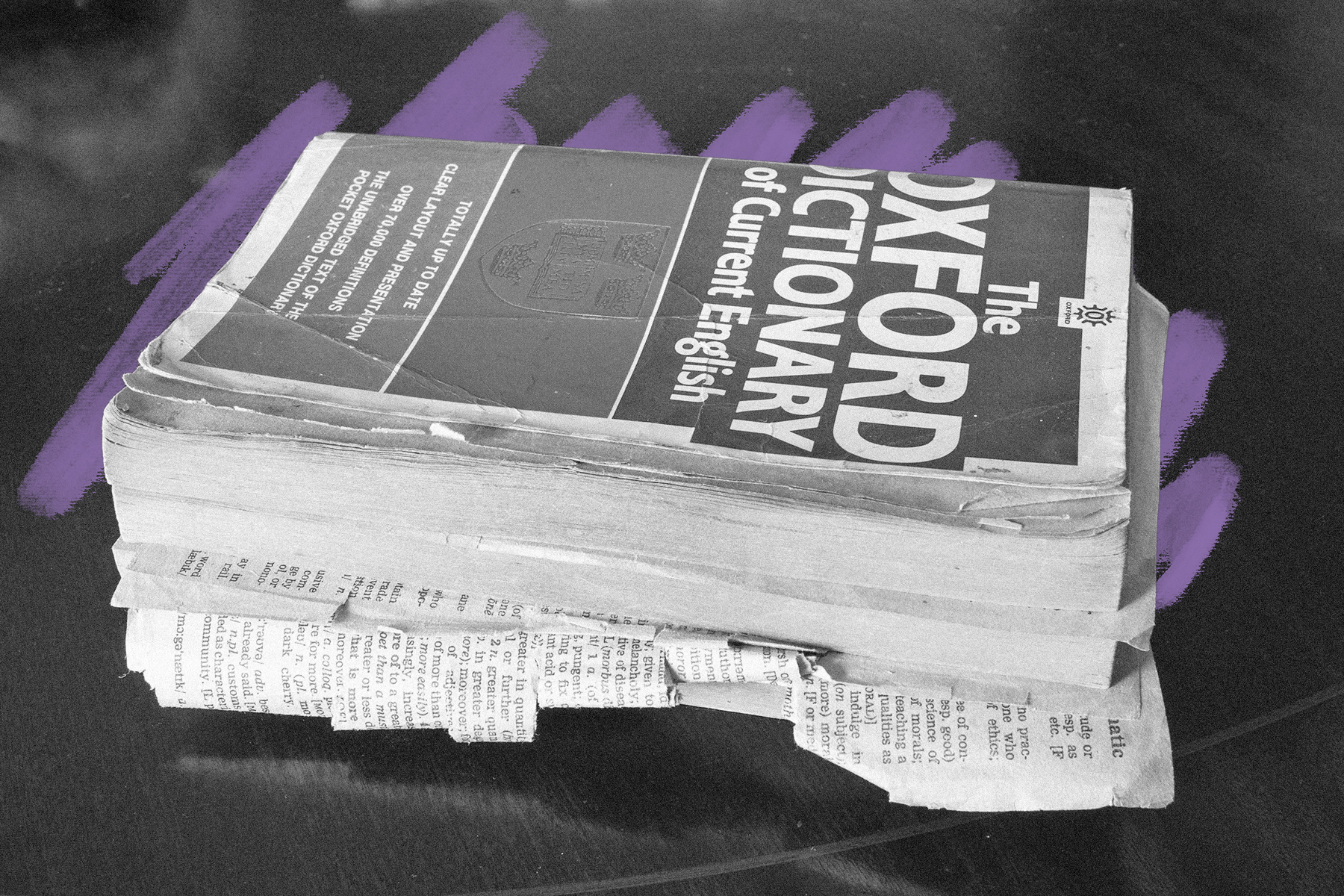

It took the Oxford English Dictionary editors five years just to reach the word ‘ant.’

The Oxford English Dictionary, also known simply as the OED, is a massive reference book containing not just words (including obsolete ones) and their definitions, but also detailed information on the words’ history and usage. The project was born in 1857, when the Philological Society of London, a group dedicated to the history of literature and words, established a committee to collect words that didn’t appear in the dictionaries of the time. Their goal was to document the English language going back to Anglo-Saxon times, which ended with the Norman Conquest in 1066, so there was quite a lot of territory to cover.

Work started in earnest in 1879, after Oxford University Press signed on to finance and publish the dictionary, at the time called the New English Dictionary (NED). The staff buckled down to reading and researching; editor James Murray estimated the dictionary would take about 10 years to compile. In 1884, after five years of work, the first fascicle (an installment of a book published in parts) came out. It covered only the words “a” through “ant.”

The project was clearly more ambitious than the Philological Society had originally imagined. Murray started working on the dictionary full time, and over the next several years he was joined by another editor and two co-editors. The last fascicle was published on April 19, 1928, nearly 50 years after work began. The original plan was for the dictionary to contain 6,400 pages over four volumes; ultimately, the first edition of the NED contained 12 volumes with 15,487 pages, covering a whopping 414,825 words. Today, the OED continues to document the growing English language and includes more than 600,000 entries, with new words and meanings added regularly.