More than 60 nations signed a pact to outlaw war after WWI.

International relations changed dramatically after World War I. Public opinion swung heavily against war, and peacekeeping measures were at the top of political agendas. One of the most ambitious attempts at deterring future conflict was signed on August 27, 1928: the Kellogg-Briand Pact. It outlawed war between the nations that signed, including France, the United States, the United Kingdom, Germany, Japan, Italy, Belgium, Poland, Czechoslovakia, Ireland, Canada, Australia, New Zealand, South Africa, and India.

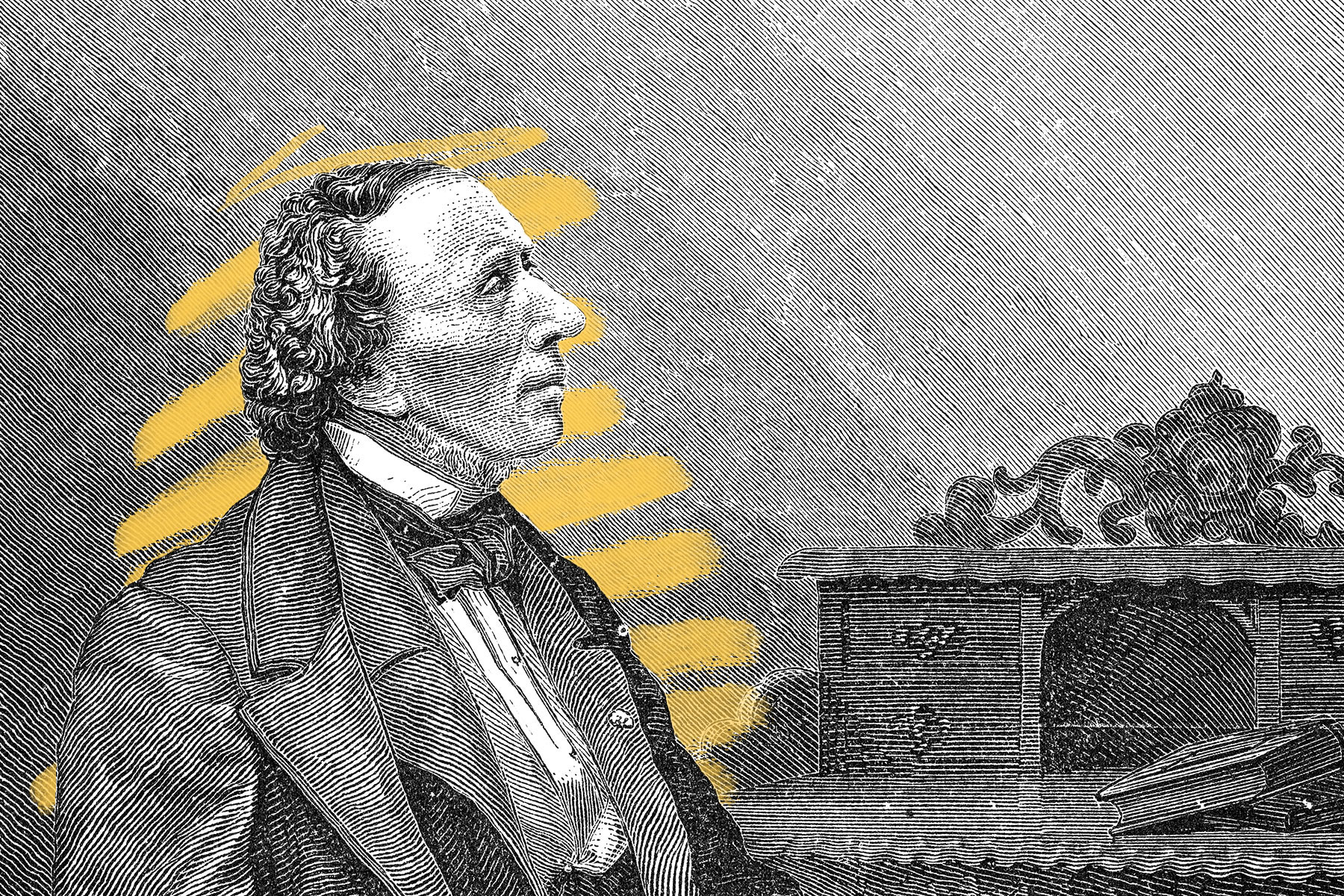

The pact was named after U.S. Secretary of State Frank B. Kellogg and French Minister of Foreign Affairs Aristide Briand, who spearheaded it. Briand had proposed a bilateral agreement between France and the U.S. to deter aggression (especially from Germany), but eventually other countries were invited to join. The response was overwhelming. The world was eager to maintain peace: 15 nations signed the pact initially, and 47 more joined in the years that followed. Signatories of the “Paris Pact” (the agreement’s nickname) were to renounce war as an instrument of national policy and settle disputes by peaceful means.

While acts of aggression were outlawed, self-defense was permitted, a loophole that led to the pact’s eventual downfall. What’s more, violations carried no real legal consequences, and nations soon began justifying international aggression as self-defense without facing any repercussions, beginning with the Japanese invasion of Manchuria, China, in 1931. Although the pact failed to prevent World War II, it remains in effect today, and its goals are still relevant. In 1945, as the war drew to a close, key ideas such as renouncing the use of war and promoting peaceful solutions were incorporated into the United Nations Charter, which was signed by many of the same nations that had signed the Kellogg-Briand Pact.