WWII soldiers carried emergency chocolate that was intentionally made to taste bad.

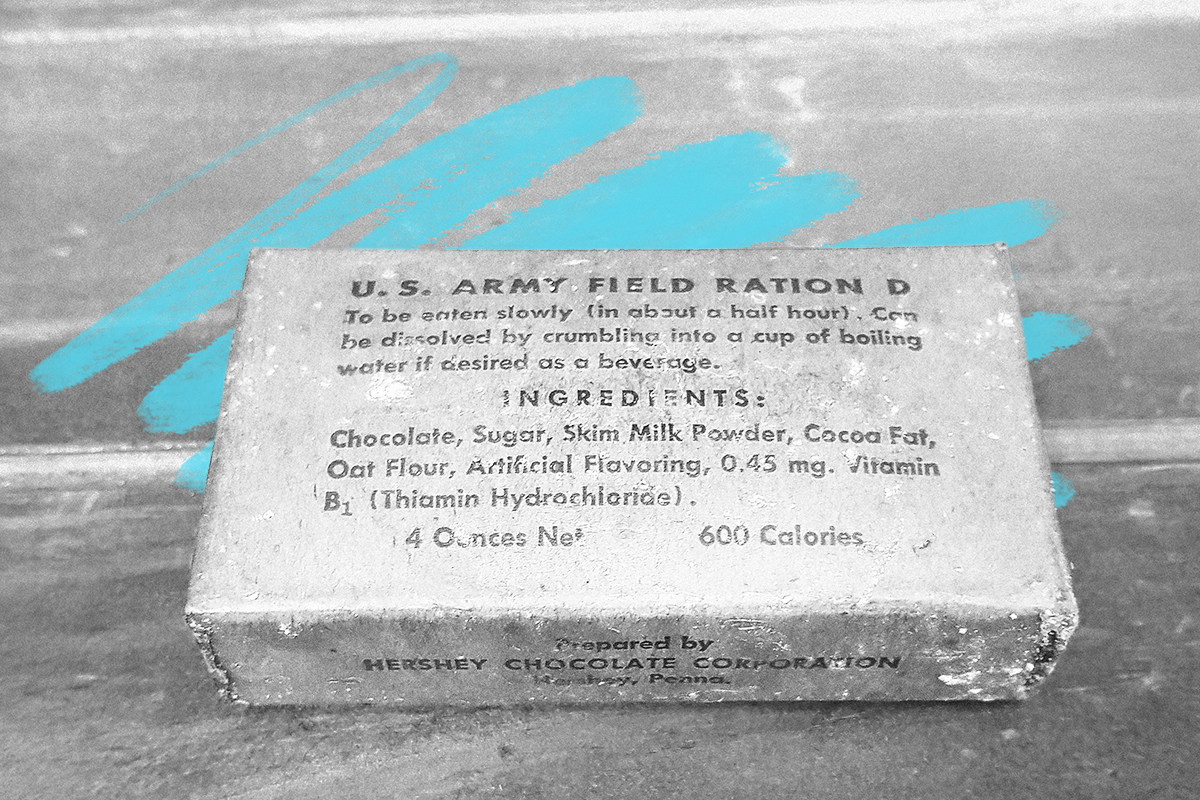

In 1937, Captain Paul Logan of the U.S. Army Quartermaster General’s office visited the Hershey Chocolate Corporation with the mission of creating a chocolate bar for use as an emergency ration for soldiers. Among the requirements, this bar needed to supply a hearty dose of energy and have a higher melting point than normal chocolate, so as to remain solid in sweltering conditions. The bar was also meant to taste “a little better than a boiled potato,” in Logan’s words, meaning it had to be unappetizing enough to prevent soldiers from scarfing down the ration when no emergency was afoot.

Following those instructions wasn’t as simple as it sounds. Hershey chemist Sam Hinkle came up with a suitable formula of chocolate liquor, skim milk powder, cocoa butter, oat flour, vanillin, and barely enough sugar, but the resulting paste-like mix was too thick to be poured into molds and was challenging to extract after setting. Even so, Hershey was able to churn out 100,000 of what became known as “D ration” bars per day by 1939, and by the end of 1945, production of these and other military-designated chocolates was up to around 24 million units per week.

As for the order to ratchet up the unappetizing quotient, it’s safe to call this mission accomplished. The dense ration bars, formulated for a high melting point, were difficult to bite into, and the low sugar level meant taste buds were overwhelmed by the bitterness of dark chocolate. As a result, these unappealing bricks were typically saved for emergencies as intended, but there turned out to be an unforeseen use for them as well. With communities across war-torn Europe sometimes desperate for any kind of food, U.S. regiments found they could win over suspicious locals by handing out the chocolate rations.